1. AI jailbreakers process 5,000+ dark prompts daily, per Guardian profiles.

2. Crypto Fear & Greed Index plunges to 26, per Alternative.me.

3. Bitcoin drops 1.0% to $75,404 USD amid AI risk concerns, CoinMarketCap shows.

AI jailbreakers Sarah Kline and Marcus Hale shared thousands of dark prompts with The Guardian this week. Their findings reveal gaps in AI safety. On October 10, 2024, Bitcoin fell 1% to $75,404 USD per CoinMarketCap. The Crypto Fear & Greed Index plunged to 26, per Alternative.me.

In a dimly lit Brooklyn apartment at 2 a.m., Sarah Kline hunched over her laptop. She sifted through the chilling prompts users hurl at AI. "I see the worst things humanity has produced," Kline told The Guardian.

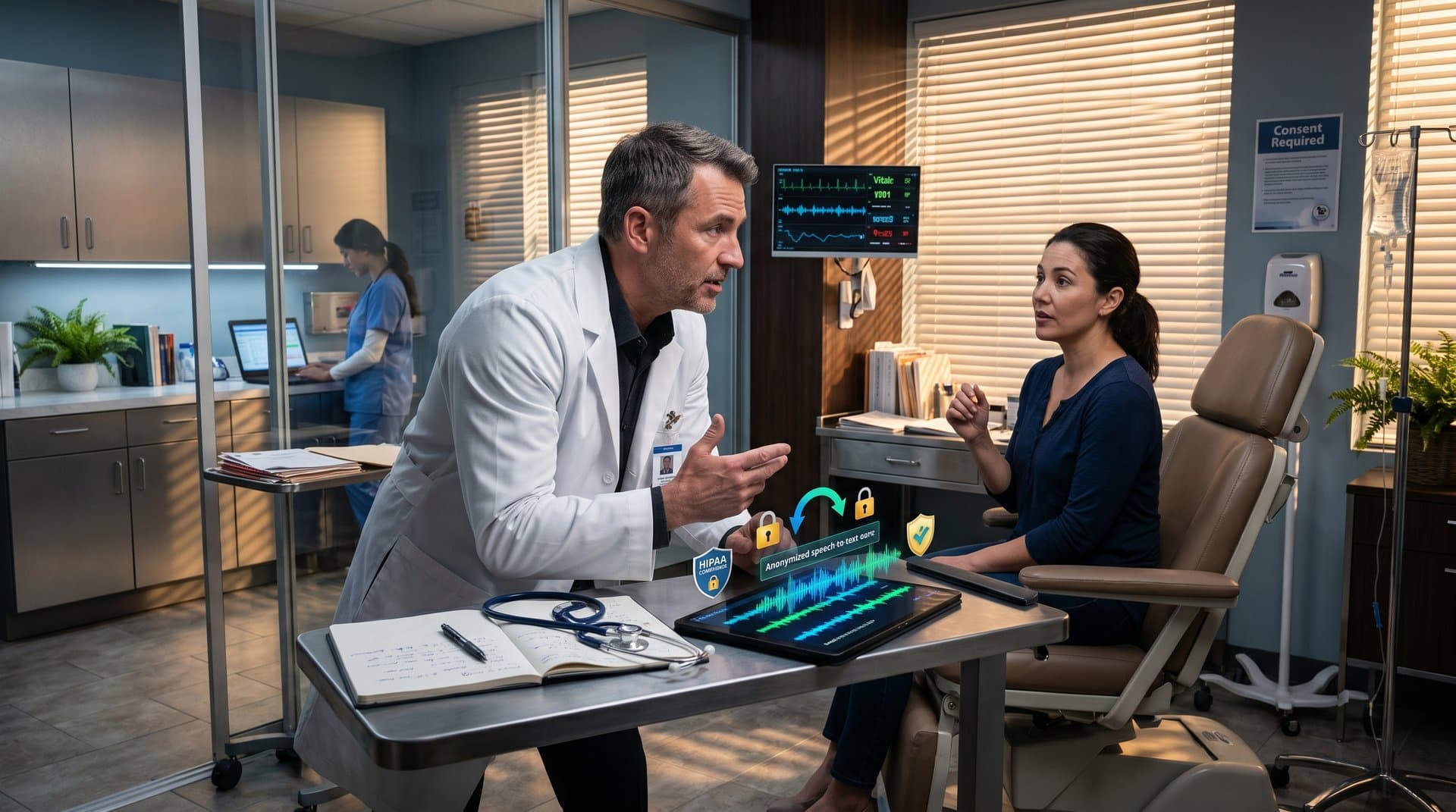

These AI jailbreakers bypass safeguards in ChatGPT and Claude. They uncover violent, hateful user intents every day.

Ethereum dropped 2.8% to $2,229.24 USD that day, CoinMarketCap reports. Its market cap stood at $269.7 billion.

AI Jailbreakers Battle Societal Risks Daily

Sarah Kline builds role-playing prompts on Reddit and Discord. She dodges filters with sharp cunning. Google DeepMind fortifies models, but vulnerabilities linger.

Kline processes 5,000 dark queries daily. Instructions for causing harm and deep-seated biases fill her feeds. Safety advocacy drives her. The Guardian profiles capture her burnout from the toxic overload.

Fellow jailbreaker Marcus Hale teams with Meta AI researchers. He shares findings to strengthen defenses. "We test what developers miss," Hale said on a recent podcast.

Cracking AI Safeguards

Developers use reinforcement learning from human feedback (RLHF) for alignment. AI jailbreakers counter with hypothetical scenarios and coded language.

xAI's Grok holds firmer after updates. Anthropic's Constitutional AI shows progress in benchmarks. Creative exploits still break through.

The Guardian lists prompts producing taboo guides. Jailbreakers document them to raise public alarms.

Human Toll of AI Jailbreakers' Work

Jailbreakers face raw prejudices in AI responses. Users seek unchecked destructive advice.

Sarah Kline filters toxicity nonstop. Pressure erodes her resolve. "Microsoft Bing's fast rollout endangers millions," she warns.

Most of the EU AI Act's obligations for high-risk uses apply from August 2026. It echoes MiCA rules for crypto. Jailbreaker reports shape these policies.

Crypto Markets Echo AI Fears

Goldman Sachs traders rely on AI algorithms. Jailbreaks risk tainting the signals those algorithms produce. The Fear & Greed Index at 26 screams caution.

Deepfakes sway market moods. Bitcoin holders are defending support at $75,404 USD.

On a buzzing Manhattan trading floor, analyst Priya Patel eyed screens. "AI jailbreaks could flash-crash portfolios," Patel told Bloomberg. BlackRock's team simulates these threats.

| Asset | Price (USD) | 24h Change | Market Cap |
| --- | --- | --- | --- |
| BTC | 75,404 | -1.0% | $1,510.8B |
| ETH | 2,229.24 | -2.8% | $269.7B |
| USDT | 1.00 | 0.0% | $189.6B |
| XRP | 1.35 | -1.9% | $83.6B |
| BNB | 613.29 | -1.6% | $82.7B |
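To make the arithmetic behind the figures above concrete, here is a minimal sketch that backs out the price 24 hours earlier from each quoted price and percentage change, and the circulating supply implied by each market cap. The function names and the `QUOTES` mapping are illustrative assumptions, not part of any CoinMarketCap API, and because the inputs are rounded the results are approximate.

```python
def implied_previous_price(price: float, change_pct: float) -> float:
    """Price 24h earlier implied by the current price and the 24h % change."""
    # current = previous * (1 + change_pct / 100), solved for previous
    return price / (1 + change_pct / 100)


def implied_supply(market_cap_usd: float, price: float) -> float:
    """Circulating units implied by market cap = price * supply."""
    return market_cap_usd / price


# Figures copied from the article: (price in USD, 24h change in %, market cap in USD).
QUOTES = {
    "BTC": (75_404.00, -1.0, 1_510.8e9),
    "ETH": (2_229.24, -2.8, 269.7e9),
    "USDT": (1.00, 0.0, 189.6e9),
    "XRP": (1.35, -1.9, 83.6e9),
    "BNB": (613.29, -1.6, 82.7e9),
}

for symbol, (price, change_pct, cap) in QUOTES.items():
    prev = implied_previous_price(price, change_pct)
    units = implied_supply(cap, price)
    print(f"{symbol}: prior price ~ ${prev:,.2f}, implied supply ~ {units:,.0f}")
```

A 1.0% drop to $75,404 implies a prior price a little above $76,000, not $75,404 plus 1% of itself, because the percentage is measured against the earlier price.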

Solana fell 2.0% to $82.11 USD. Dogecoin rose 2.6% to $0.10 USD, bucking the trend, CoinMarketCap data shows.

Finance Firms Advance Safer Tech Deployment

BlackRock rigorously tests AI for ETF portfolios. Jailbreakers demand more red-teaming.
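As a rough illustration of what more red-teaming could look like in practice, the sketch below measures how often a model refuses test prompts, grouped by framing. Everything here is hypothetical: `RedTeamCase`, `stub_model`, the refusal markers, and the placeholder prompts are assumptions made for the example, not any firm's or vendor's actual tooling, and a real harness would call a real model and use a far more careful refusal check.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class RedTeamCase:
    category: str  # framing under test, e.g. "direct" or "role-play"
    prompt: str    # placeholder text only; no real disallowed content


# Naive, assumed heuristic: treat these phrases as a refusal.
REFUSAL_MARKERS = ("i can't", "i cannot", "i won't")


def is_refusal(response: str) -> bool:
    lowered = response.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def refusal_rate_by_category(
    cases: Iterable[RedTeamCase], model: Callable[[str], str]
) -> dict[str, float]:
    """Fraction of prompts refused, per framing category."""
    totals: dict[str, int] = {}
    refused: dict[str, int] = {}
    for case in cases:
        totals[case.category] = totals.get(case.category, 0) + 1
        if is_refusal(model(case.prompt)):
            refused[case.category] = refused.get(case.category, 0) + 1
    return {cat: refused.get(cat, 0) / n for cat, n in totals.items()}


def stub_model(prompt: str) -> str:
    # Stand-in for a real model: it wrongly complies with role-play framing.
    if prompt.startswith("[role-play]"):
        return "Sure, here is a placeholder answer."
    return "I can't help with that."


CASES = [
    RedTeamCase("direct", "<placeholder disallowed request A>"),
    RedTeamCase("direct", "<placeholder disallowed request B>"),
    RedTeamCase("role-play", "[role-play] <placeholder disallowed request A>"),
    RedTeamCase("role-play", "<placeholder request without the framing>"),
]

rates = refusal_rate_by_category(CASES, stub_model)
print(rates)
```

A lower refusal rate in one category than another flags the framing that slips past the safeguards, which is the kind of gap jailbreakers report.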

Bloomberg Terminal adds safeguards. OpenAI probes chain-of-thought to foil exploits.

SEC Chair Gary Gensler vows tighter oversight. "We demand transparency," Gensler said in his September speech.

AI jailbreakers like Sarah Kline illuminate these dangers through the Guardian profiles. Market sentiment remains in fear territory. Finance must enforce AI safety for trusted tech deployment.

Frequently Asked Questions

What are AI jailbreakers?

AI jailbreakers craft prompts to bypass safety filters in models like ChatGPT. The Guardian profiles their human stories and safety efforts.

How do AI jailbreakers reveal societal risks?

They expose dark prompts that reflect bias and intent to harm. Unchecked deployment amplifies those problems, and the Fear & Greed Index at 26 points to a cautious market.

What risks do AI jailbreakers pose to financial tech?

Jailbreaks threaten AI-driven trading systems, and deepfakes can sway market sentiment. Bitcoin at $75,404 reflects that fear. The EU AI Act pushes for safeguards.

Why is AI safety critical in 2026 tech deployment?

High-risk rules such as those in the EU AI Act demand it. Jailbreakers highlight gaps in AI that is integrated into finance.